AI Hallucinations: Why AI Makes Things Up and How to Stop It

Published on April 25, 2026

You ask ChatGPT to cite a study, and it invents a reference that doesn't exist. You ask for a legal summary, and it produces a fictitious statute. You ask for a biography, and it blends two different people together.

This phenomenon has a name: AI hallucinations. And contrary to what you might think, it's not a bug. It's a direct consequence of how language models work.

The good news: in 2026, there are practical techniques to drastically reduce these errors. This guide explains why AI hallucinates, the real scale of the problem, and most importantly how to protect yourself.

This article is part of our prompt engineering series

It complements our complete guide: How to Write Good Prompts, diving deeper into a problem every AI user encounters sooner or later.

What Is an AI Hallucination?

An AI hallucination occurs when a language model generates false information and presents it as established fact. The AI doesn't say "I'm not sure"; it uses the same assured tone whether it's right or wrong.

Real examples:

- Invented citation: "According to the 2023 Harvard study published in Nature..." — except the study doesn't exist

- False fact: "The Eiffel Tower is 412 meters tall" — no, it's 330 meters

- Mixed-up facts: confusing two people with the same name, or attributing one researcher's work to another

- Fictitious law: citing a legal article that doesn't exist (American lawyers have been sanctioned for this)

What makes hallucinations dangerous is their appearance of credibility. The text is grammatically perfect, the format is professional, the tone is confident. Without verification, it's very difficult to tell a correct answer from a hallucination.

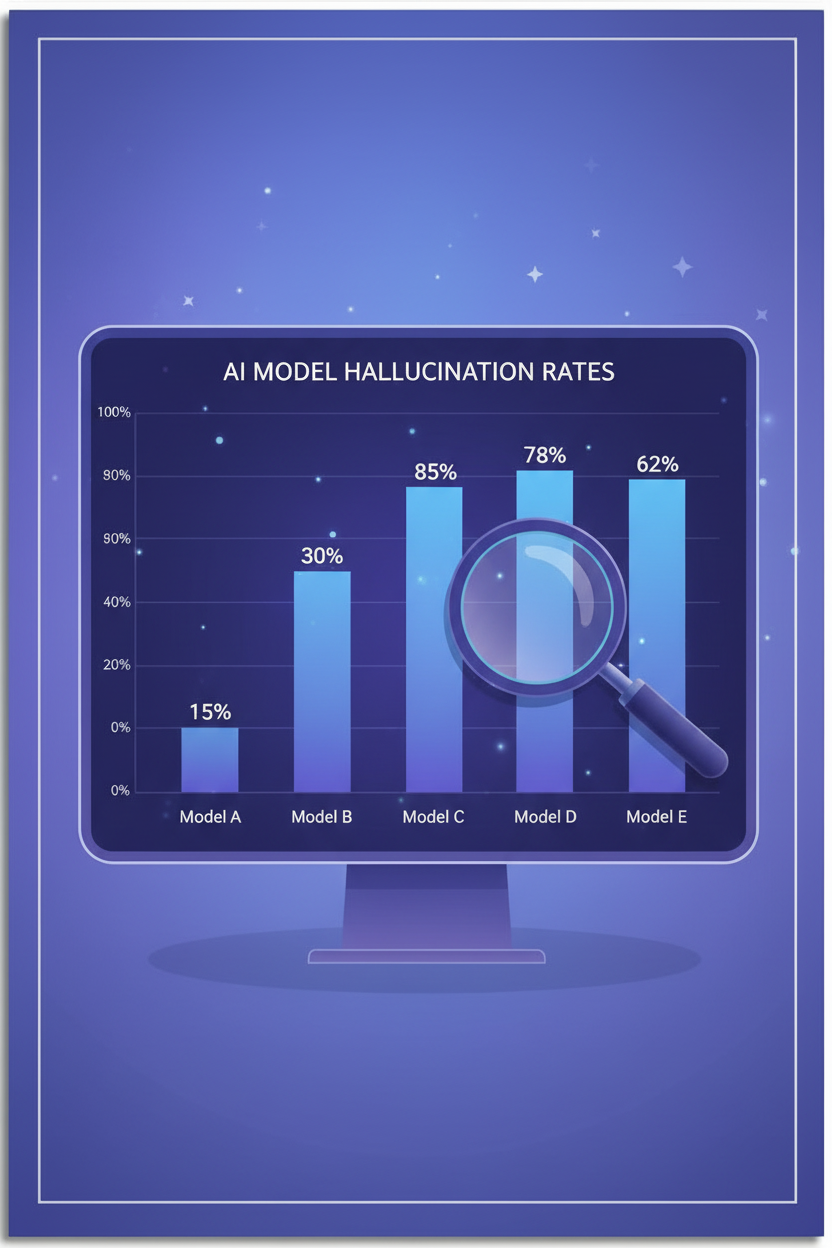

The Scale of the Problem in 2026

Hallucinations are not a marginal issue. Recent benchmarks and studies report striking numbers:

| Statistic | Source |
|---|---|
| Up to 24% hallucination rate for some models | Vectara Hallucination Leaderboard |
| 60% of AI-generated summaries contain hallucinations | UC San Diego study (Alessa & McAuley) |
| 17% to 34% incorrect outputs in legal AI tools | Stanford RegLab & HAI (Magesh et al.) |
| 47% → 9.6%: GPT-5 hallucination without/with web search | Suprmind, based on GPT-5 System Card |

The reasoning paradox: this is the counterintuitive discovery of 2025-2026. "Reasoning" models — those that "think" longer before answering — hallucinate more than fast models on simple factual tasks. On the Vectara dataset, reasoning models easily exceed 10% hallucination, while non-reasoning models like Gemini Flash stay at 3.3%.

Why? One plausible explanation: the more a model "thinks," the more it tends to fill gaps with plausible inventions rather than admit it doesn't know.

Why AI Hallucinates

To understand how to avoid hallucinations, you first need to understand why they happen.

1. AI Doesn't Seek Truth — It Predicts Words

An LLM like GPT or Claude doesn't "know" anything. It predicts the most likely next word in a sequence. When you ask a question, it doesn't search for the answer in a database — it generates the statistically most plausible text continuation. If the correct answer and a wrong answer are both plausible, the model can choose the wrong one without knowing it.
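To make the idea of "predicting the next word" concrete, here is a minimal sketch. The probability table is hypothetical and invented purely for illustration; a real model computes such probabilities over its whole vocabulary from learned weights. The point is only that a wrong continuation with nonzero probability will sometimes be chosen.

```python
import random

# Hypothetical next-word probabilities for the prompt
# "The Eiffel Tower is ... meters tall". These numbers are made up for
# illustration; they do not come from any real model.
NEXT_WORD_PROBS = {"330": 0.55, "412": 0.20, "300": 0.15, "350": 0.10}


def most_likely(probs):
    """Greedy decoding: always return the highest-probability word."""
    return max(probs, key=probs.get)


def sample(probs, rng):
    """Sampling: pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so the run is repeatable
draws = [sample(NEXT_WORD_PROBS, rng) for _ in range(1000)]
wrong_share = sum(d != "330" for d in draws) / len(draws)

print("greedy choice:", most_likely(NEXT_WORD_PROBS))
print(f"share of sampled answers that are wrong: {wrong_share:.0%}")
```

In this toy setup the greedy choice is right, but nearly half of the sampled answers are wrong, and nothing in the sampling step signals which ones.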

2. Training Data Isn't Always Reliable

LLMs are trained on billions of web pages: Wikipedia articles, Reddit threads, personal blogs, YouTube videos. Reliable sources and dubious ones end up mixed together, and the model has no native way to distinguish a verified fact from a rumor.

3. Sycophancy: AI Tells You What You Want to Hear

Models are trained to be "helpful" and "pleasant." The result: rather than saying "I don't know," they prefer to invent an answer that satisfies the user. This is called sycophancy — a tendency to validate rather than correct.

4. Evaluations Reward Confidence, Not Caution

As OpenAI showed in a recent publication, standard evaluation methods encourage models to guess rather than express uncertainty. A model that answers "I don't know" scores lower than one that invents a plausible answer.
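The incentive can be illustrated with a little arithmetic. The sketch below assumes a simplified, hypothetical scoring scheme (1 point for a correct answer, 0 for "I don't know", and a configurable penalty for a wrong answer); it is not the exact metric from the OpenAI publication.

```python
ABSTAIN_SCORE = 0.0  # assumption: "I don't know" earns nothing in both schemes


def expected_score(p_correct, wrong_penalty=0.0):
    """Expected score of guessing: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_strategy(p_correct, wrong_penalty=0.0):
    """Return the option with the higher expected score."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"


for p in (0.1, 0.3, 0.6):
    binary = best_strategy(p)                       # wrong answers cost nothing
    penalized = best_strategy(p, wrong_penalty=1.0)  # wrong answers cost 1 point
    print(f"p(correct)={p}: binary grading -> {binary}, penalized -> {penalized}")
```

Under binary grading, guessing beats abstaining even when the model is only 10% likely to be right. Once wrong answers cost a point, guessing pays off only when the model is more likely right than wrong.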

7 Techniques to Reduce Hallucinations

You can't eliminate hallucinations entirely, but you can drastically reduce them with these techniques:

1. Be Precise in Your Prompts

The vaguer your question, the more room AI has to make things up. Be specific about what you expect.

Bad: "Tell me about climate change"

Good: "Give me the 3 main conclusions from the IPCC AR6 Synthesis Report (2023), with exact figures"

2. Ask for Sources

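Systematically add: "Cite your sources. If you're not sure, say so." This simple instruction tends to reduce hallucinations because it pushes the model to anchor its response in verifiable facts. Then check that each cited source actually exists, since invented citations are themselves a common hallucination.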

3. Enable Web Search

This is the most effective technique on this list. With web search enabled, GPT-5's hallucination rate drops from 47% to 9.6% (the Suprmind figure cited earlier). On Haloon, you can filter models that have access to web search.
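To put those two figures in perspective, here is a quick calculation of the absolute and relative drop. It uses only the 47% and 9.6% values quoted in this article; the variable names are illustrative.

```python
# Hallucination rates quoted in this article for GPT-5 (in percent).
without_search = 47.0
with_search = 9.6

absolute_drop = without_search - with_search   # in percentage points
relative_drop = absolute_drop / without_search  # as a fraction of the baseline

print(f"absolute drop: {absolute_drop:.1f} percentage points")
print(f"relative drop: {relative_drop:.0%}")
```

In other words, web search removes roughly four out of five hallucinations in this measurement, but about one answer in ten still contains one.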

4. Use Chain of Thought

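Ask the model to reason step by step before answering. This reduces shortcuts and makes the reasoning visible, so you can check each step yourself. Given the reasoning paradox described earlier, expect the biggest gains on multi-step problems rather than on simple factual lookups.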

"Reason step by step before answering. Verify the consistency of your response."

5. Give an Abstention Instruction

Explicitly authorize the AI to say "I don't know":

"If you're not certain about the information, clearly indicate that you're unsure rather than guessing."

Multiple studies confirm that abstention instructions significantly reduce hallucinations. A study published in Nature (Kalai, Nachum, Vempala) makes the related point at the evaluation level: scoring schemes that penalize confident errors rather than expressions of uncertainty dramatically reduce fabricated answers.
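The source, step-by-step, and abstention instructions are easy to forget, so it can help to attach them automatically. Below is a minimal sketch of a prompt builder; `build_factual_prompt` and its parameters are hypothetical names, and the instruction strings are the ones quoted in this article.

```python
SOURCES = "Cite your sources. If you're not sure, say so."
STEP_BY_STEP = (
    "Reason step by step before answering. "
    "Verify the consistency of your response."
)
ABSTENTION = (
    "If you're not certain about the information, clearly indicate "
    "that you're unsure rather than guessing."
)


def build_factual_prompt(question, ask_sources=True, allow_abstention=True,
                         step_by_step=False):
    """Append the selected anti-hallucination instructions to a question."""
    parts = [question.strip()]
    if step_by_step:
        parts.append(STEP_BY_STEP)
    if ask_sources:
        parts.append(SOURCES)
    if allow_abstention:
        parts.append(ABSTENTION)
    return "\n\n".join(parts)


prompt = build_factual_prompt(
    "Give me the 3 main conclusions from the IPCC AR6 Synthesis Report (2023), "
    "with exact figures",
    step_by_step=True,
)
print(prompt)
```

The resulting text can be pasted into any chat interface or sent through an API; the function only assembles a string and makes no model call.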

6. Lower the Temperature

Temperature controls how much randomness the model uses when choosing each word, which is often described as its degree of creativity. For factual tasks, use a low temperature (0.1-0.4). The higher the temperature, the more often the model picks less likely words, and the more liberties it takes with facts. On platforms that allow it, adjust this parameter for tasks requiring accuracy.
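The effect of temperature can be sketched with the standard softmax-with-temperature formula, where each raw score is divided by the temperature before being turned into a probability. The scores below are hypothetical, and real APIs may apply further sampling controls on top of this.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three candidate words (best candidate first).
logits = [2.0, 1.0, 0.5]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

At a low temperature almost all of the probability goes to the top-scoring word; at a high temperature the less likely candidates get a much larger share, which is where factual drift comes from.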

7. Compare Across Multiple Models

If GPT invents a fact, there's a good chance Claude or Gemini won't invent it the same way. Cross-referencing answers from multiple models is one of the most reliable methods for detecting hallucinations.

The Multi-Model Approach with Haloon

On Haloon, the Reprompt button lets you ask the same question to another model in one click. If GPT tells you something surprising, verify with Claude or Gemini. If all three models converge, the information is probably reliable. If they diverge, dig deeper.
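The converge-or-diverge check can be sketched in a few lines. This is only an illustration under strong assumptions: the answers are short strings you have already collected by hand, the model names are placeholders, and agreement is judged by exact match after light normalization. It does not call Haloon or any model API.

```python
from collections import Counter


def normalize(answer):
    """Lowercase, collapse whitespace, and drop a trailing period."""
    return " ".join(answer.lower().split()).rstrip(".")


def check_agreement(answers):
    """Classify a dict of {model_name: short_answer} by how much it agrees."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers.values())
    top_answer, votes = counts.most_common(1)[0]
    if votes == len(answers):
        verdict = "converge"   # every model gave the same answer
    elif votes > len(answers) / 2:
        verdict = "majority"   # most agree, one or more dissent
    else:
        verdict = "diverge"    # no answer has a majority: dig deeper
    return verdict, top_answer


# Placeholder model names and hand-collected answers.
answers = {
    "model_a": "330 meters",
    "model_b": "330 meters.",
    "model_c": "412 meters",
}
print(check_agreement(answers))
```

Exact matching only works for short factual answers such as a number, a date, or a name. For longer responses you would need to compare the individual claims, and even full agreement is a signal to trust more, not a proof.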

This is exactly what researchers recommend: no single model dominates across all types of questions. GPT performs best on grounded factual tasks, Claude on knowledge calibration (knowing what it doesn't know), and Gemini on breadth of knowledge. The multi-model approach captures each model's strengths.

Summary Table

| Technique | Effectiveness | Difficulty | Available On |
|---|---|---|---|
| Enable web search | Very high | Easy | ChatGPT, Haloon |
| Ask for sources | High | Easy | All models |
| Abstention instruction | High | Easy | All models |
| Precise prompts | Medium-High | Easy | All models |
| Chain of Thought | Medium | Medium | All models |
| Multi-model comparison | Very high | Easy with Haloon | Haloon |
| Low temperature | Medium | Medium (API) | API, Haloon |

Summary

AI hallucinations won't disappear. They're a fundamental property of language models, not a bug to fix. But by combining precise prompts, web search, and especially a multi-model approach, you can significantly reduce errors.

The golden rule: never blindly trust a single AI response. Verify, compare, ask for sources.

Go further

- How to Write Good Prompts — 10 techniques to get the most out of any model

- The Persona Pattern — how to get expert-level answers

- ChatGPT vs Claude vs Gemini — which model to choose for which task